Toto Site World Toto

Thorough verification, so that no more users fall victim

Welcome to World Toto, the world's No. 1 eat-and-run scam verification service.

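Why Toto Sites Need Scam Verification

Countless Toto sites advertise themselves as major sites, but only a handful of private Toto sites are actually recognized as major sites by users and scam verification communities. Most of the self-proclaimed major sites are unscrupulous eat-and-run sites that take users' money. They periodically change their address and name, redesign their homepage, pose as long-established major sites, and lure users with promotions and free bonus money, producing an endless stream of new victims. For an ordinary user, it is practically impossible to tell accurately whether a given private Toto site is a trustworthy safe playground or major site. For this reason, World Toto uses a strict and meticulous scam verification system to select and recommend only the safest and most reliable Toto sites in Korea, so that users can play with peace of mind. We also hold a security deposit from each safe playground to protect users' funds against unexpected incidents, so please feel confident using the safe playgrounds World Toto recommends.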

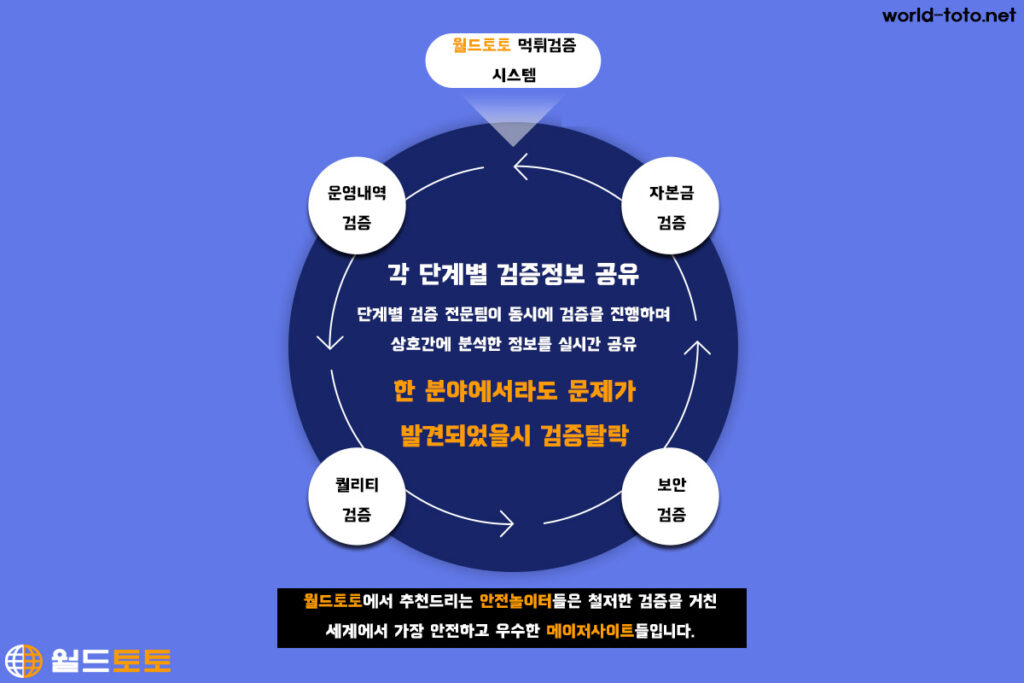

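The Toto Site Scam Verification Process

The World Toto operators are veterans of Korea's private Toto industry who have built up experience and connections across operations, development, and other areas. We are doing our utmost to restore users' trust in private Toto sites, which has been damaged by a few unscrupulous eat-and-run operators, and to eradicate the scams that are rampant in the market. In addition to World Toto we run several other scam verification sites, and we share information on scam operators with Korea's leading private Toto user communities, maintaining a joint response line so that we can act efficiently. With the goal of turning every private Toto site in Korea into a safe playground, each operator carries out in-depth verification in their own area of expertise, and only sites that pass every check without issue are introduced to users as safe playgrounds. The sections that follow briefly explain what we focus on during verification.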

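Operating History Verification

When a private Toto operator repeatedly commits eat-and-run scams, it inevitably leaves traces of itself behind. From a user's point of view, once a scam operator changes its domain and homepage design and reopens, there is no way to check whether it has scammed before. However, these operators only hastily change what is immediately visible, so they cannot easily change the way they operate or their characteristics, such as the homepage source code, domain information, server hosting provider and location, and the bank accounts used for deposits. World Toto analyzes and collects information on every private Toto site currently in service and stores it in a database used to trace scam history. These operators also advertise their newly opened sites through unscrupulous scam verification sites that will recommend any site as a safe playground for a fee, without any verification, so they inevitably come to the attention of the World Toto operators, who know the industry well. When verifying a private Toto site, we first trace whether its operators have previously run a site under another name. If they have, we analyze in depth whether any eat-and-run incidents occurred and how users regarded the site.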

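Capital Verification

It goes without saying that the main reason private Toto operators commit eat-and-run scams is money. Some simply want to enrich themselves by taking users' money, but there are also many cases where an operator running on a shoestring with insufficient capital cannot cover a user's large withdrawal request, stalls with one excuse after another, and ends up not paying. A private Toto site with sufficient capital values users' trust and its own reputation more than the immediate loss from a large withdrawal, so it never commits eat-and-run scams. A scam may pay off in the short term, but in the long run it causes greater damage through a worse reputation among users and fewer new sign-ups once the site is listed as a scam operator. During verification we check how much capital the operator holds and evaluate whether withdrawal requests are processed quickly and without problems. Sites that pass every stage are then required to place a security deposit of at least a set amount with World Toto, and only those that comply are finally recommended to users as safe playgrounds.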

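Quality Verification

The fundamental purpose of a private Toto site is to provide the enjoyment of betting. However safe a site may be, can it really be called a proper safe playground if it offers little to play or is inconvenient to use? World Toto's safe playground verification is a comprehensive evaluation: it checks not only whether a site is safe from eat-and-run scams but also whether users can connect reliably and enjoy using it. During verification we assess the overall quality of the service the site provides. We check whether the games on offer are varied and, if they are open-source games, whether the site runs the latest version and avoids versions with fairness problems. We also evaluate the site's overall interface, design, and legibility, and whether its server environment can provide smooth access even with many concurrent users.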

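Security Verification

For users of private Toto sites, a personal data leak can cause even greater harm than an eat-and-run scam. Being safe from eat-and-run scams alone does not make a site safe. Cases in which attackers hack poorly secured Toto sites, leak member information, and misuse it for crimes such as voice phishing are increasing, and many people fall for voice phishing they would normally see through because of their particular situation as private Toto members. As the final step of verification, World Toto assesses the site's information security level. Rather than a superficial vulnerability check with tools such as a website vulnerability scanner, we have skilled hackers carry out hands-on testing that actually attempts to access the site's member database. We also verify how well the site can respond to attacks such as DDoS, which aim not to leak member information but to take down the server and disrupt users' access.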

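Problems That Arise When You Do Not Use a Safe Playground

Because private Toto sites operate only online, finding a safe and trustworthy site is the most important issue in using them. Countless eat-and-run incidents occur every day, and victims are left unable to do anything about it. An individual user is simply not in a position to prevent these scams or respond when one occurs. World Toto recommends only safe playgrounds that have passed strict verification, operates a security deposit system in case of an unforeseen incident, and is fully prepared to compensate users who suffer financial loss while using a safe playground we recommended. Beyond eat-and-run issues, if a dispute arises with a site's operators, we respond and mediate on the customer's behalf to help resolve it smoothly. Please use only the safe playgrounds we recommend and enjoy private Toto safely.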

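Financial Loss from Eat-and-Run Scams

What Toto site users fear most is an eat-and-run scam, because it directly costs them money and because it happens so often. Most people who have used private Toto sites for a long time have been scammed at least once, whatever the amount. The enjoyment of betting does not come only from having your predictions turn out right. Its real enjoyment lies in the financial reward that follows a win; without that reward it means nothing. If you deposit your own money, convert it to game money, grow it by carefully analyzing and winning games, and then cannot convert it back to cash, the effort is wasted. The emptiness, and the helplessness and anger of being unable to respond, are hard to imagine for anyone who has not been through it. People use private Toto sites to enjoy the games and to make money. To enjoy betting as it is meant to be, you need to use a safe and trustworthy safe playground.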

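Harm from Personal Data Leaks

Personal data leaked through poorly secured Toto sites is being misused for voice phishing and other crimes. Most private Toto sites do not treat a leak of members' personal data as a serious problem unless it harms them directly, and their response is inadequate, so this kind of harm occurs at more sites than you might expect. Most private Toto sites also lack the funds to form an in-house security team or hire outside experts to build a security system. Major sites of a certain size with stable funding face such heavy hacking attacks from competitors aimed at disrupting service that they run their own security teams and secure their sites through regular security patches and security monitoring, if only to defend against those attacks. Using a major site rather than a small private Toto site is therefore one way to protect your personal data. The surest way is to use a safe playground recommended by World Toto, which has passed in-depth security verification through actual hacking attempts.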

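Difficulty Responding When a Dispute Arises

When you use a safe playground recommended by a scam verification site, you will notice that each verification site provides a different sign-up code. The code tells the private Toto operators which verification site a user signed up through. In everyday use you will not notice any difference, but if a dispute arises with the site's operators, the outcome can differ depending on your sign-up code. The operators inevitably respond differently depending on whether the user came from a well-known verification site that brings in many users or from one nobody has heard of. If the user came from a verification site with heavy traffic, a complaint to that verification site could complicate matters, so unless the issue is serious, operators tend to accommodate the user's demands. If the dispute still cannot be resolved, you can ask the verification site's operators to resolve or mediate it, and they will deal with the private Toto operators on your behalf. This makes resolution far smoother and easier than handling the dispute alone, which is why we advise using the safe playgrounds we recommend.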

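Tips for Using Toto Sites

It is not easy for an individual user to judge accurately whether a Toto site has an eat-and-run history or is likely to commit one, but simply avoiding private Toto sites that offer excessive benefits and free bonus money compared with major sites greatly reduces the risk. In addition to this way of avoiding scam sites, here are a few essential tips for using private Toto sites.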

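Excessive Free Bonus Money Is Poison

Private Toto operators that repeatedly commit eat-and-run scams use dramatic promotions and free bonus money to attract users and gather funds in a short time, then disappear and open a new Toto site, repeating the cycle and producing many victims. In most cases, users choose these new, small, scam-prone sites over major sites with long incident-free records because of higher odds and generous free bonus money. The cardinal sin in betting is to be blinded by a small immediate gain and take on risk out of proportion to the reward. Betting is by nature high risk, high return, but risk management is essential to successful betting, and it should also apply to choosing the site you bet on. A small private Toto site that offers more generous free bonus money than well-funded major sites is very likely to be a scam site, so please avoid it.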

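Checking the Rules Is Essential

Very few users properly read the rules a private Toto site publishes. Most assume that the rules are much the same everywhere, which is a very dangerous assumption. Most users who contact our customer center for help are not eat-and-run victims but users who did not check the site's rules and had their funds confiscated or withdrawals blocked for a rule violation. If the rule in question is reasonable rather than unfair to users, there is nothing we can do to help. Rules differ from one Toto site to another, so after signing up, do not deposit money and start playing right away; first read and understand all of the rules. Some unscrupulous private Toto sites include rules that are highly unfair to users, so when checking the rules of a newly joined site, it is a good idea to compare them with those of a well-known major site or safe playground.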

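The Importance of the Sign-Up Code

The safe playground sign-up codes provided by scam verification sites differ from site to site. The code identifies which verification site's recommendation a user signed up through. Users may not attach much meaning to it, but in practice it has a major effect. The verification site you signed up through works, simply put, like a status, and users who come from a large verification site with many users, such as World Toto, hold a correspondingly high status. High status brings no special advantage in everyday use, but it changes how the operators treat you when a problem arises. From the operators' point of view, users from a large verification site are naturally harder to deal with than users from a small one. Also, in a dispute with the private Toto operators you can only ask for help from the operators of the verification site that provided your sign-up code, so choose your code carefully. We take full responsibility for helping users who sign up with a World Toto sign-up code to the end. Please trust us and use Toto sites with peace of mind.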

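The Promise of World Toto, No. 1 in Scam Verification

Most scam verification sites are banner advertising sites that recommend any site as a safe playground for a fee, without proper verification. Everyone claims to specialize in scam verification, but few actually do it properly. We are confident that World Toto is Korea's leading scam verification community, run by specialist operators with long experience in the private Toto market. In addition to World Toto, we operate a number of Toto site and casino site scam verification communities and are building the largest and most accurate body of data on scam sites. Based on this data we carry out accurate, in-depth verification and carefully select only private Toto sites that can truly be trusted, which we confidently recommend to users as safe playgrounds. In case of an unforeseen incident, we hold a security deposit of at least a set amount from each safe playground operator and are thoroughly prepared to compensate 100% of the losses of users who are unfairly harmed. If you run into a problem while using a safe playground we recommended, please contact our Telegram customer center at @worldtoto at any time. We will do our best to stand on your side and represent you.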